Introduction

Forget drones, FinTech and (especially) apps that claim to be ‘digitally disruptive’. The big tech trends that will have a significant impact on the IT and business world in 2016 and beyond range from machine learning and neuromorphic computing architectures to a growth in sensory and contextual information and more ambient user experiences. Could 2016 be the tipping point when the physical and virtual worlds finally merge?

Information of Everything

It’s chaos out there. Smart devices of all kinds are producing and sending text, audio, video, sensory and contextual information.

“The Information of Everything addresses this influx with strategies and technologies to link data from all these different data sources,” say analysts at IT research company Gartner. “Information has always existed everywhere, but has often been isolated, incomplete, unavailable or unintelligible … advances in semantic tools such as graph databases, as well as other emerging data classification and information analysis techniques, will bring meaning to the often chaotic deluge of information.”

Whether you call it the Internet of Things, the Internet of Data or the Information of Everything, we know one thing – 2016 will produce more and more big data. It’s what we do with it that could change.

The device mesh

Forget the smartphone – that’s just the start. The era of the device mesh means accessing information and apps via all kinds of devices, from phones, watches and wearables to smart TVs, sensors in homes and even the dashboard in a car.

“The device mesh is innately part of the Internet of Things … even apps like Waze are part of this trend, turning cars into live traffic data,” says Mike Crooks, Head of Innovation at Mubaloo Innovation Lab. “The device mesh is the trend of moving to the interconnected ideal of the Internet of Things.”

3D printing

Some think there’s been a lot of hype around 3D printing, but it remains a major growth area with vast potential. As the materials that can be 3D printed increase, so do the practical applications for 3D printers, with aerospace, medical, automotive, energy and the military all destined to benefit.

“Open hardware and democratised production methods like 3D printing can be seen as ushering in a third industrial age,” says Dr Kevin Curran, Technical Expert at the IEEE, who nevertheless sees food printing as the imminent trend for 2016. ChefJet can print in chocolate and sugar, Choc Edge creates 2D/3D chocolate decorations, ChocoByte prints custom 3D solid chocolate bars, and Natural Machines’ Foodini can print both pasta and pizza.

A major research area is bioprinting, where food is printed dot by dot to build up edible meals. “The aim is to create a range of food inks, basically substances that form gels with water, and the Holy Grail is to bring food to life from nothing,” he says. The mission, as with all 3D printing, is to eliminate the production chain for food.

Ambient user experiences

Here we’re talking about a long-term future of immersive environments with augmented and virtual reality, but for now it’s about continuity between devices and location.

“Hyper-location technologies are key to delivering an ambient user experience that is also giving rise to the idea of slippy UX,” says Crooks, who thinks that mobile is becoming more about short, fast interactions with minimal user input.

“It’s different from the simple sensor-based apps on smartphones today,” says Curran. “Instead of the user having to go and look for something like hotels, the device would already know what kind of hotel they are looking for based on what hotels they have picked in the past.”

Context comes from both human and physical elements. The human elements are emotional state, habits, interests, group dynamics, social interactions and the colocation of others, present tasks and general goals; the physical elements are the user’s absolute position, relative position, and the light, pressure, noise and atmosphere of the area.
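To make Curran's hotel example concrete, here is a minimal sketch of how a device might rank options from past choices. It is an illustration only, not how any real assistant works: the `rank_hotels` function, the tag vocabulary and the sample hotels are all hypothetical, and a real system would weigh many more of the context signals listed above.

```python
from collections import Counter


def rank_hotels(past_picks, candidates):
    """Order candidate hotels by how well their tags match past choices.

    Hypothetical helper: each hotel is a dict with a "name" and a set of "tags".
    """
    # Count how often each tag appeared among hotels the user picked before.
    tag_counts = Counter(tag for hotel in past_picks for tag in hotel["tags"])

    def score(hotel):
        # A candidate scores higher the more it shares frequently chosen tags.
        return sum(tag_counts[tag] for tag in hotel["tags"])

    # Highest score first; candidates with equal scores keep their input order.
    return sorted(candidates, key=score, reverse=True)


# Made-up history and search results, purely for illustration.
past_picks = [
    {"name": "Hotel A", "tags": {"boutique", "city-centre"}},
    {"name": "Hotel B", "tags": {"boutique", "spa"}},
]
candidates = [
    {"name": "Airport Inn", "tags": {"airport", "budget"}},
    {"name": "Old Town Boutique", "tags": {"boutique", "city-centre"}},
]

ranked = rank_hotels(past_picks, candidates)
print([hotel["name"] for hotel in ranked])
```

With this made-up history the boutique hotel in the city centre comes out on top, without the user having to search for it.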

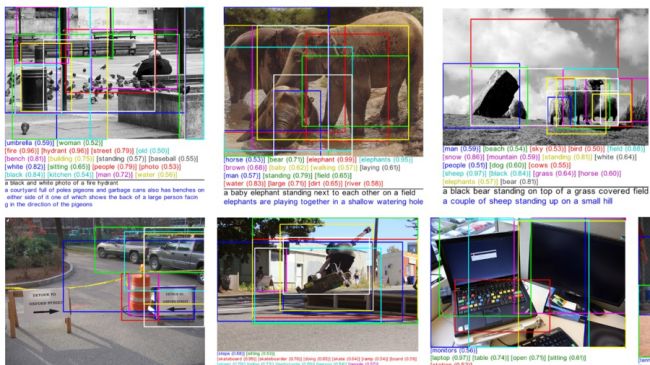

Advanced machine learning

Another tech trend for 2016 and beyond – and tied up with the Information of Everything – is advanced machine learning, where computers automate data processing by learning and adapting. The end result is artificial intelligence. Handling complex datasets requires deep neural nets (DNNs) that allow computers to both act autonomously and perceive the world on their own.

“DNNs are what makes smart machines appear intelligent,” according to analysts at Gartner. “DNNs enable hardware or software-based machines to learn for themselves all the features in their environment, from the finest details to broad sweeping abstract classes of content.”

In this fast-evolving area, businesses that understand how to use advanced machine learning can gain a huge competitive advantage.

Virtual assistants

Hardware is dead – it’s software that’s increasingly the agent of change, so instead think of autonomous vehicles, virtual personal assistants and smart advisors as the future faces of tech. Google Now, Cortana, Alexa and Siri are just the beginning.

“Over the next five years we will evolve to a post-app world with intelligent agents delivering dynamic and contextual actions and interfaces,” says David Cearley, vice president and Gartner Fellow. “IT leaders should explore how they can use autonomous things and agents to augment human activity and free people for work that only people can do. However, they must recognise that smart agents and things are a long-term phenomenon that will continually evolve and expand their uses for the next 20 years.”

Adaptive security architecture

With more digital businesses come more hackers, and with the birth of a new threat landscape we’re seeing the death of antivirus software. “The Volatile Cedar malware takes great strides to remain under the radar of leading antivirus solutions,” says Curran, offering an example of why we need adaptive security architectures.

As well as having a ‘stealth mode’ in which it lies dormant to evade detection, Volatile Cedar is capable of sophisticated monitoring of system processes and includes a custom-built remote access Trojan.

“Techniques to avoid detection include frequently checking antivirus results and changing versions and builds on all infected servers when any traces of detection appear,” says Curran. Cloud-based services and open APIs only make the demand for adaptive security higher.

“Application self-protection, as well as user and entity behaviour analytics, will help fulfil the adaptive security architecture,” says Gartner.

Bluetooth beacons

Bluetooth-powered beacons – AKA ‘lighthouses’ – that can track the exact location of a smartphone or smartwatch wearer, and send them real-time notifications, are now being installed in shopping malls, museums, hotels, airports and even offices.

So far it’s been rather lamely talked up as a way of texting vouchers to passing shoppers, but beyond mobile commerce the spread of intelligent, wireless Bluetooth beacon hardware also means indoor mapping and much, much more.

“We believe that Bluetooth 4.2 beacons will become one of the big trends for next year, taking the technology from outside of the marketing sphere and into the Internet of Things sphere,” says Crooks. Think notifications of gate changes and train delays at airports and train stations, hands-free payments, indoor mapping and multi-room music that follows you around your home.
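As a rough illustration of how a phone might turn beacon signals into a sense of location, the sketch below uses the widely used log-distance path-loss model to estimate range from received signal strength (RSSI). Everything specific here is an assumption: the function names, the beacon names, the calibrated power of -59 dBm at one metre and the path-loss exponent of 2.0 are hypothetical values, and real deployments calibrate per site and smooth noisy readings.

```python
def estimate_distance_m(rssi_dbm, tx_power_dbm=-59.0, path_loss_exponent=2.0):
    """Estimate distance to a beacon from its received signal strength.

    Log-distance path-loss model: d = 10 ** ((tx_power - rssi) / (10 * n)),
    where tx_power is the expected RSSI at 1 m (assumed here, not measured)
    and n is the path-loss exponent (about 2 in open space, higher indoors).
    """
    return 10 ** ((tx_power_dbm - rssi_dbm) / (10 * path_loss_exponent))


def nearest_beacon(readings):
    """Return the name of the beacon with the smallest estimated distance."""
    return min(readings, key=lambda name: estimate_distance_m(readings[name]))


# Hypothetical RSSI readings (in dBm) from three beacons in an airport.
readings = {"gate_12": -79.0, "cafe": -59.0, "lounge": -90.0}

for name, rssi in readings.items():
    print(f"{name}: about {estimate_distance_m(rssi):.1f} m away")
print("Nearest beacon:", nearest_beacon(readings))
```

An app could use the nearest beacon to decide which notification is relevant, such as a gate change, although signal strength alone gives only an approximate range rather than a precise position.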

At any rate, Bluetooth beacons are about to emerge as the links within, and between, the Internet of Things, the smart city and the smart home.

IoT platforms

Not a day goes by without some company or other stating that it’s the best platform yet for the Internet of Things. It’s easy to get cynical when so many are jostling to become ‘the one’, but IoT devices will have an increasing need for management, security and integration.

“IoT platforms constitute the work IT does behind the scenes from an architectural and a technology standpoint to make the IoT a reality,” says Gartner. “The IoT is an integral part of the digital mesh and the ambient user experience, and the emerging and dynamic world of IoT platforms is what makes them possible.”

But standardisation? Yeah, right. “Any enterprise embracing the IoT will need to develop an IoT platform strategy, but incomplete competing vendor approaches will make standardisation difficult through 2018,” says Cearley.

Advanced system architecture

With the digital mesh and smart machines about to go ballistic, computing is about to get intense. Cue the latest power-play – ultra-efficient neuromorphic architectures underpinned by field-programmable gate arrays (FPGAs), which should allow computers to run at speeds greater than a teraflop.

“Systems built on GPUs and FPGAs will function more like human brains that are particularly suited to be applied to deep learning and other pattern-matching algorithms that smart machines use,” says Cearley. “FPGA-based architecture will allow further distribution of algorithms into smaller form factors, with considerably less electrical power in the device mesh.”

The result? Advanced machine learning will be present anywhere that Internet of Things devices hang out – such as in homes, in cars, on wristwatches… and even in humans.

Source: TechRadar